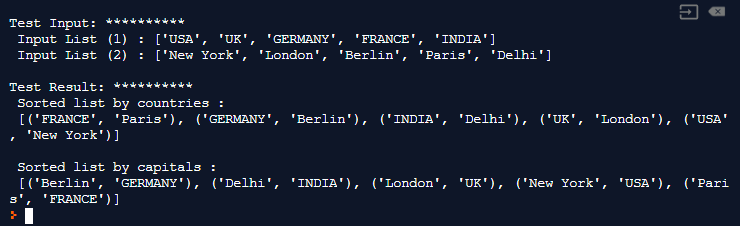

Most of these files are text files, but there are also some huge binary files. What is unusual is that each zip file contains about 100 files, yet 1-3 of them account for up to 95% of the zip file's size.

At first I tried to decompress the files in memory, processing only one file at a time. This method failed heroically, with various memory explosions and EC2 running out of memory. That makes sense: at first you have a 1GB zip file in memory, and once you decompress each file you are holding about 2-3GB in memory. So, after many tests, the solution was to copy the zip file to disk (in the temporary directory `/tmp`) and then iterate over the files in it. The situation was much better this time, but I still noticed that the entire decompression process took a huge amount of time.

The first piece is a set of common functions that simulate actually doing something with the files in the zip file. The parallel variant consists of `def f3(fn, dest):` and a helper `def unzip_member_f3(zip_filepath, filename, dest):`, both of which start by opening the zip file with `open(..., 'rb') as f`, plus a collection loop that sets `total = 0` and iterates `for future in as_completed(futures):`. The problem with using a process pool is that it requires the original zip file to be on disk. So in order to use this solution on my web server, I must first save the in-memory zip file to disk, and then call this function.
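As a rough illustration of the "save to disk first, then extract with a pool" idea, here is a minimal sketch. It is not the original `f3`: the names `extract_member` and `extract_all_parallel` and the `max_workers` default are hypothetical, and it uses a thread pool from `concurrent.futures` instead of a process pool to keep the example self-contained.

```python
import os
import tempfile
import zipfile
from concurrent.futures import ThreadPoolExecutor, as_completed


def extract_member(zip_path, member_name, dest):
    """Extract one member and return its size on disk in bytes."""
    # Each task opens its own ZipFile so no file handle is shared between workers.
    with zipfile.ZipFile(zip_path) as zf:
        extracted_path = zf.extract(member_name, dest)
    return os.path.getsize(extracted_path)


def extract_all_parallel(zip_bytes, dest, max_workers=4):
    """Write an in-memory zip to a temp file, then extract its members concurrently."""
    with tempfile.TemporaryDirectory() as tmp_dir:
        # Hypothetical file name; the pool workers need a real path on disk.
        zip_path = os.path.join(tmp_dir, "upload.zip")
        with open(zip_path, "wb") as f:
            f.write(zip_bytes)

        with zipfile.ZipFile(zip_path) as zf:
            names = [info.filename for info in zf.infolist() if not info.is_dir()]

        total = 0
        with ThreadPoolExecutor(max_workers=max_workers) as executor:
            futures = [
                executor.submit(extract_member, zip_path, name, dest)
                for name in names
            ]
            # Collect results as each extraction finishes.
            for future in as_completed(futures):
                total += future.result()
    return total
```

`ProcessPoolExecutor` exposes the same `submit` interface, so it could be swapped in; whether that is faster depends on the archive and the machine, and should be measured.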

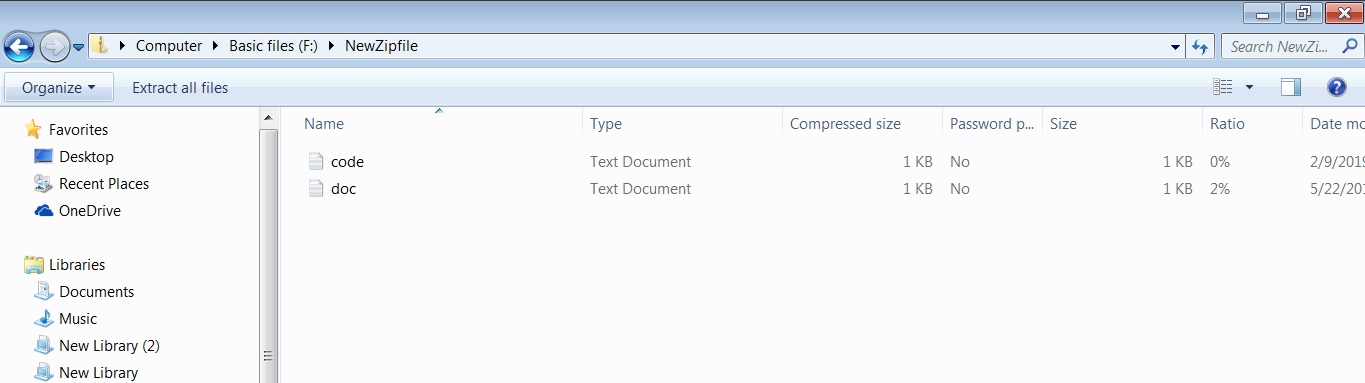

This particular application looks at the name and size of each file and compares them with the file that has already been uploaded to AWS S3. If the file differs from the copy in AWS S3, or the file itself has been updated, it is uploaded to AWS S3. The challenge is that these zip files are too big. Their average size is 560MB, but some of them are larger than 1GB.
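The name-and-size comparison can be sketched roughly as follows. This is only an illustration under assumptions: `members_to_upload` is a hypothetical helper, and `known_sizes` is a plain dict standing in for whatever listing of already-uploaded objects the real application gets from AWS S3.

```python
import zipfile


def members_to_upload(zip_file, known_sizes):
    """Return names of members that are new or whose size differs from known_sizes.

    zip_file: a path or a binary file-like object containing the zip archive.
    known_sizes: hypothetical mapping of file name -> size in bytes of the
                 copy that has already been uploaded.
    """
    changed = []
    with zipfile.ZipFile(zip_file) as zf:
        for info in zf.infolist():
            if info.is_dir():
                continue
            # file_size is the uncompressed size stored in the zip directory,
            # so nothing has to be decompressed to make this comparison.
            if known_sizes.get(info.filename) != info.file_size:
                changed.append(info.filename)
    return changed
```

Because only the zip's central directory is read, this check stays cheap even for very large archives.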

The context is this: a zip file is uploaded to a web service, and Python then needs to decompress the zip file and analyze and process each file in it.